Build Log: The 94 Trillion Transistor NAS

Or in other words - the nerd dream of an all-SSD high capacity NAS.

But to explain the headline first: it is just the sum of everything you could consider to be an individual transistor. Of course, the Threadripper CPU itself contains over 24 billion transistors, but that pales in comparison to the RAM, where each bit consists of a capacitor and a transistor. And then you get to the SSDs, where you could count each charge-trap NAND cell as a transistor, and you quickly start counting in trillions.
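As a rough sanity check of the headline figure, the tally can be sketched like this. The capacities are the ones from the final spec list of this build. The assumptions, which are mine and not stated in the text, are that every SSD uses TLC NAND (3 bits per cell), that spare area and ECC bits are ignored, and that the CPU is counted as a flat 24 billion.

```python
# Rough transistor tally for the headline figure.
# Assumptions (not from the build notes): every SSD is TLC NAND (3 bits per
# cell), over-provisioning and ECC bits are ignored, CPU counted as 24e9.

TB = 10**12              # drive vendors use decimal terabytes
GIB = 2**30              # DIMM capacities are binary gibibytes
BITS_PER_BYTE = 8
BITS_PER_NAND_CELL = 3   # assumed TLC


def nand_cells(capacity_bytes: float) -> float:
    """One charge-trap cell (counted as one transistor) per 3 stored bits."""
    return capacity_bytes * BITS_PER_BYTE / BITS_PER_NAND_CELL


def dram_cells(capacity_bytes: float) -> float:
    """One access transistor per stored bit (1T1C DRAM cell)."""
    return capacity_bytes * BITS_PER_BYTE


components = {
    "CPU": 24e9,
    "RAM (8x 32 GB)": dram_cells(8 * 32 * GIB),
    "Data SSDs (8x 3.84 TB)": nand_cells(8 * 3.84 * TB),
    "Boot + scratch SSDs (4x 960 GB)": nand_cells(4 * 0.96 * TB),
}

total = sum(components.values())
for name, count in components.items():
    print(f"{name:34s} {count / 1e12:8.3f} trillion")
print(f"{'Total':34s} {total / 1e12:8.3f} trillion")
```

Under those assumptions the sum lands a little above 94 trillion, and almost all of it comes from the data SSDs.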

Please keep in mind that I usually don't do build logs. I might do this more in the future, but this first take is more of an info dump: a thought process explanation plus some bits of info and things to look out for that others interested in something similar may find handy.

Acquiring the SSDs

Given my main objective was to have SSDs as bulk storage, mainly so I don't have to worry about speed bottlenecks, it was quite simple - price was the main factor.

SSDs are (at the time of writing) nowhere near the price of HDDs per-capacity, at roughly 4x the price per bit, or in other words a 3.84 TB SSD costs similar money to a 16 TB HDD.

This means going with brand new drives from a retailer was not an option if I wanted to pull this off with any reasonable storage capacity while keeping both kidneys.

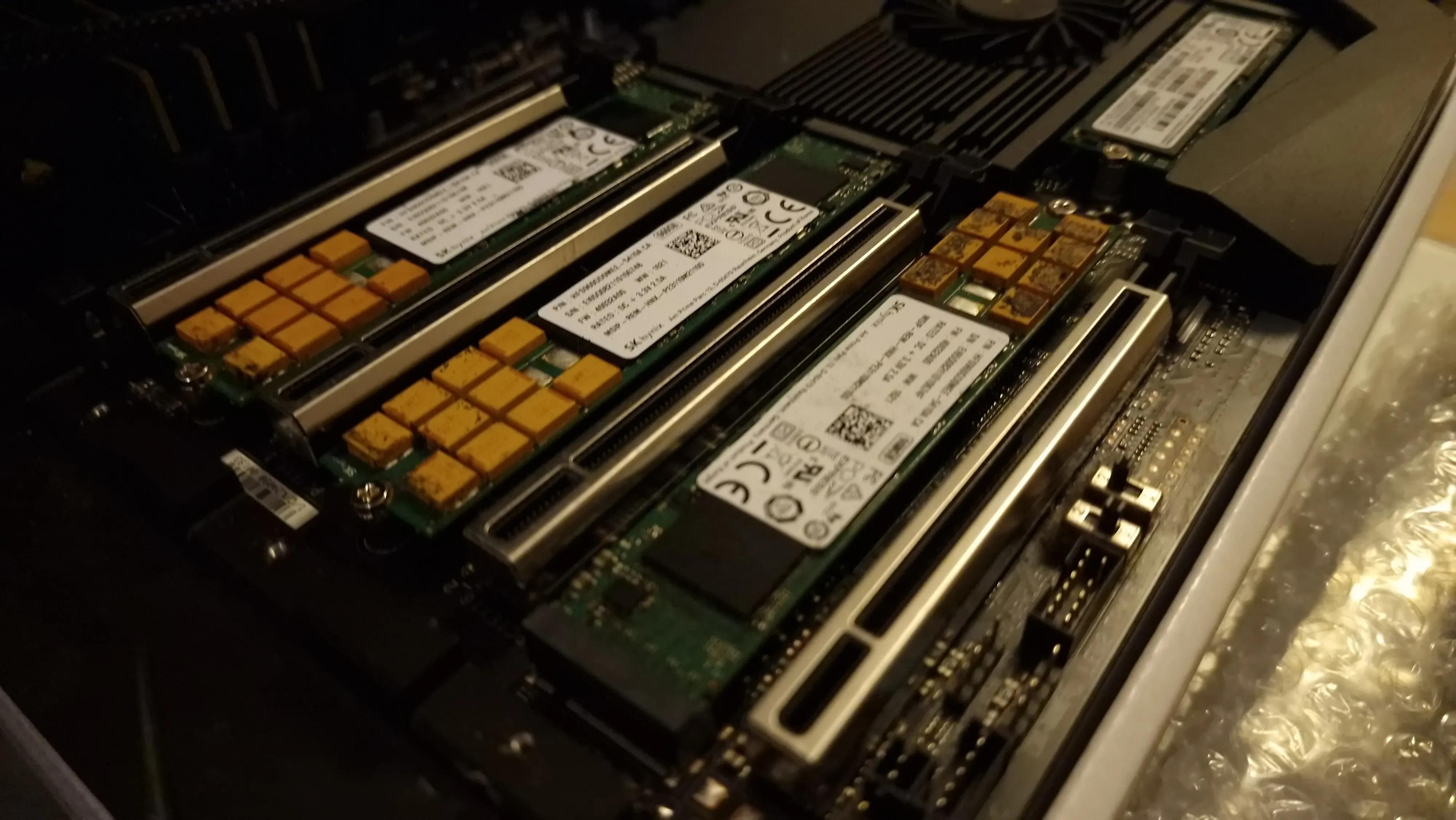

After almost 3 years of passively checking SSD prices on both the new and second hand market, I struck a deal I don't think I will see again in a very long time - supposedly a set of Samsung PM983 enterprise 3.84 TB NVMe SSDs, new and for right around 145€ per drive with a heatsink included - local seller too, so no 21% import tax!

Hesitant, as you should be with deals like this, I waited a bit and talked to others about it. In the meantime a relative of mine pitched in with a request for 2 drives for himself, so after about 2 weeks of deciding whether to finally jump onto the idea of an all-SSD NAS, I sent an email asking for 6 of these supposedly new drives, while also mentioning that I don't need a heatsink and asking whether that could lower the price.

In the end I was able to get a bulk and heatsink-less discount to lower the price to an insane 120€ per drive.

A couple days later the drives arrived, very nicely packaged. I immediately threw them onto a test bench and started running CrystalDiskMark to test performance characteristics and H2testw to check whether they really are 3.84 TB. Both tests came out exactly as one would expect for a 3.84 TB Samsung PM983, and with SMART saying the drives have near zero usage, I was very pleased. Sure, you can erase or modify SMART info, but given these enterprise drives survive a lot of TBW and that I would be running them in RAID, I didn't particularly care about that.

This all happened just before Christmas 2023. Between that, deciding whether to use my existing Threadripper system for it, and not being quite set on going down to 10 TB of usable storage once RAID is accounted for, I ended up not doing anything with the drives until January. That is when I decided to buy 4 more from the same seller as the listing went back up. Now being set on using the Threadripper due to the high PCIe lane requirements of 8 SSDs plus other devices, I began getting the remaining components.

Side note about the NVMe carrier cards: in the picture, the first one is the GIGABYTE gen4 AIC that was bundled with the Threadripper motherboard. I originally intended to use that but swapped to a second brand new Asus card due to temperatures. The Asus card runs the power-hungry Samsung PM983 drives at around 60-65°C controller temp while the GIGABYTE one often went near 80°C. Both are perfectly fine, but I did not want to have such a disparity between the two halves of the SSD array. Furthermore, I'm unsure whether this is due to the (imo) better design of the cheaper Asus card or whether the GIGABYTE one is simply old and the pads are toast.

To rip threads or to save power?

With 8 bulk storage SSDs, some boot SSDs and a fast 40GbE network card as my minimum requirements, a lot of DIY desktops were ruled out quickly. AM4, AM5 or older Intel systems simply lack the expansion options to handle such a use case. This left me with only two reasonable options: either re-use my existing Threadripper and get more value out of it, or buy an all new Intel LGA1700 system to save power.

The Threadripper case

With this approach I need almost no new hardware, just a few "dumb" PCIe NVMe carrier cards and a network card - which I would get for the LGA1700 system anyway. It also re-uses my existing Threadripper system instead of having to sell it for next to nothing - seriously, you're basically not selling these for more than 1000€ as a full platform combo, I can get more value out of it myself!

Actually figuring out how this will all be put together was rather trivial.

The two x16 slots will be configured in x4x4x4x4 bifurcation mode and populated with quad-NVMe cards. The boot pool for the system will be three SK Hynix PE3110 M.2 NVMe SSDs, these are also enterprise-grade SSDs which I got for the original NAS in 2020, just so I could have a small fast SSD pool alongside the HDDs. As these are enterprise drives, they are also 110mm long, but luckily GIGABYTE has a habit of putting M.2 22110 slots on a lot of their motherboards, this TRX40 AORUS XTREME being one of them, so all three drives can be nicely put directly on the motherboard. Then for networking I can just get a cheap Mellanox ConnectX-3 Pro from ebay and put it in one of the x8 slots. As for SATA, the motherboard has 10 SATA ports - 8 from the chipset and 2 from a dedicated ASMedia controller - quite handy for VM passthrough!

The LGA1700 case

This would certainly be a lower power setup, but it also comes with a lot of caveats. While Intel's LGA1700 platform provides the most PCIe IO in the consumer desktop segment, it is still far from enough, which means I would need to use expensive PCIe switch cards to put 4 NVMe drives into a x8 slot. I would also want ECC memory, which means getting a W680 chipset based motherboard and new DDR5 memory. All in all this means a lot of money and no possibility for future expansion as the system will already be maxed out, all for relatively small electricity savings.

Regardless of the downsides, I decided to spec it out. The obvious (and practically only) choice of motherboard is the Asus Pro WS W680-ACE IPMI, this gives me ECC support and remote management, but crucially has enough PCIe slots to do all of this, albeit with some adapter jank.

Firstly, the two main x8 slots would run bifurcated into x8x8, each feeding a PCIe switch card with 4 SSDs, that takes care of the storage pool. The triplet of SK Hynix PE3110 SSDs is tricky, one of those can go into the 22110-length slot on the motherboard while the other two would occupy the x4 slots on riser cards. Then follows the networking, I can't just use the cheap Mellanox ConnectX-3 Pro 40GbE adapter like with the Threadripper, since it requires 8 PCIe gen3 lanes to get full bandwidth, instead I would have to opt for the much more expensive 100GbE Mellanox ConnectX-6, which is PCIe gen4, meaning it will be able to achieve 40GbE on just x4 lanes. This would be on an M.2 -> slot adapter from ebay or aliexpress. Lastly I would like more SATA ports, so the last M.2 would get one of those cheap 4 or 5 port M.2 AHCI controllers.
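The lane argument for the network card can be checked with a quick back-of-the-envelope calculation. This is a minimal sketch that only looks at raw link bandwidth after 128b/130b line encoding (8 GT/s per lane for gen3, 16 GT/s for gen4) and ignores protocol overhead, so real-world throughput will be somewhat lower.

```python
# Raw PCIe link bandwidth after line encoding, before protocol overhead.
# Gen3 and gen4 both use 128b/130b encoding.
GT_PER_S = {3: 8, 4: 16}   # giga-transfers per second, per lane
ENCODING = 128 / 130


def pcie_gbps(gen: int, lanes: int) -> float:
    """Usable link bandwidth in Gbit/s for a given generation and width."""
    return GT_PER_S[gen] * lanes * ENCODING


NIC_GBPS = 40
for gen, lanes in [(3, 4), (3, 8), (4, 4)]:
    bw = pcie_gbps(gen, lanes)
    verdict = "enough" if bw >= NIC_GBPS else "too slow"
    print(f"gen{gen} x{lanes}: {bw:5.1f} Gbit/s -> {verdict} for {NIC_GBPS}GbE")
```

A gen3 x4 link tops out at roughly 31.5 Gbit/s, which is why the gen3 card needs x8, while a gen4 x4 link offers the same bandwidth as gen3 x8.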

TCO calculations and ROI

Total Cost of Ownership is the proper way to calculate how expensive a server or NAS will be, since up-front cost is only a part of the equation - runtime energy cost matters too!

It is very easy to get caught up in up-front costs, but especially where I live, electricity isn't the cheapest, so taking it into account is important, especially for a machine that will be running 24/7.

This is also why I often joke in chats that the RTX 4080 is cheaper than the RX 7900 XTX - with my usage pattern, it is, but I won't go into the math here (and especially now with the price-cut RTX 4080 not-so-Super).

The following comparison table uses CZK prices with only the final calculations being converted to EUR based on conversion rate at the time of writing.

| Component | Threadripper | LGA1700 |
|---|---|---|
| CPU | AMD Ryzen Threadripper 3960X: 0 CZK | Intel Core i7-14700: 10990 CZK |
| RAM | 256GB ECC DDR4-3200: 0 CZK | 128GB ECC DDR5-4800: 16000 CZK |
| Motherboard | GIGABYTE TRX40 AORUS XTREME: 0 CZK | Asus Pro WS W680-ACE IPMI: 14590 CZK |
| Boot Drives | 3x SK Hynix PE3110: 0 CZK | 3x SK Hynix PE3110: 0 CZK |
| Data Drives | 8x Samsung PM983: 24000 CZK | 8x Samsung PM983: 24000 CZK |
| Networking | Mellanox ConnectX-3 Pro 40GbE: 2200 CZK | Mellanox ConnectX-6: 7000 CZK |
| Drive Carriers | 2x Asus gen4 AIC: 2800 CZK | 2x ebay PLX card: 8000 CZK |
| Power Supply | Seasonic Focus PX 650: 2300 CZK | Seasonic Focus PX 650: 2300 CZK |
| Chassis | Fractal Design Define 7 XL: 0 CZK | Fractal Design Define 7 XL: 0 CZK |
| Total cost of upgrades | 31300 CZK (1245€) | 82880 CZK (3300€) |
| Estimated power usage | 200W | 140W |

Let's first tackle the power consumption estimate. I did some testing with swapping various components in and out of the test system and found that the 8 Samsung SSDs consume roughly 50W from the wall, with the 3 SK Hynix drives adding another 20W.

This means that most of the power consumption will be going into storage and networking, so the power savings from the Intel platform become rather small, even then I figured 60W for a mostly-idle W680 system is about right, judging by my own B760 i5-12400F test system idling around 40W.

This puts the LGA1700 total at 140W.

The Threadripper was far easier to estimate as I already have it, so I just plugged it in and powered it on.

Amusingly enough, here it becomes a case of the more power hungry option being far cheaper, as the power consumption difference simply can't outweigh the up-front cost difference. A difference of 60W at my current electricity cost of roughly 7 CZK (0.28€) per kWh means it would take 14 YEARS to pay off the 51580 CZK of extra up-front cost.
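The payback figure follows directly from the table totals and the power estimates. Here is a minimal sketch of that calculation, assuming the machine runs 24/7 and that the electricity price stays constant over the whole period (which it certainly won't).

```python
# Payback time of the more efficient but more expensive platform.
# Assumptions: 24/7 operation, constant electricity price.
HOURS_PER_YEAR = 24 * 365


def payback_years(extra_cost: float, watts_saved: float, price_per_kwh: float) -> float:
    """Years until the energy savings cover the extra up-front cost."""
    kwh_saved_per_year = watts_saved / 1000 * HOURS_PER_YEAR
    return extra_cost / (kwh_saved_per_year * price_per_kwh)


extra_upfront_czk = 82880 - 31300   # LGA1700 total minus Threadripper total
years = payback_years(extra_upfront_czk, watts_saved=200 - 140, price_per_kwh=7)

print(f"Extra up-front cost: {extra_upfront_czk} CZK")
print(f"Payback time: {years:.1f} years")
```

The 60W difference is worth roughly 3700 CZK per year, which is where the 14 years come from.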

Now of course, the Threadripper wasn't actually free, but it was bought for a different use case. I daily-drove the thing as a desktop for almost 3 years, so in a sense it has paid itself off and it is effectively free for this new project, or at least that is how I'm counting it. Otherwise it would either sit unused or I would have to sell it (I tried to sell it a couple of times before), which even with optimistic sale estimates merely cuts the LGA1700 ROI to 7 years.

Finalizing the specs

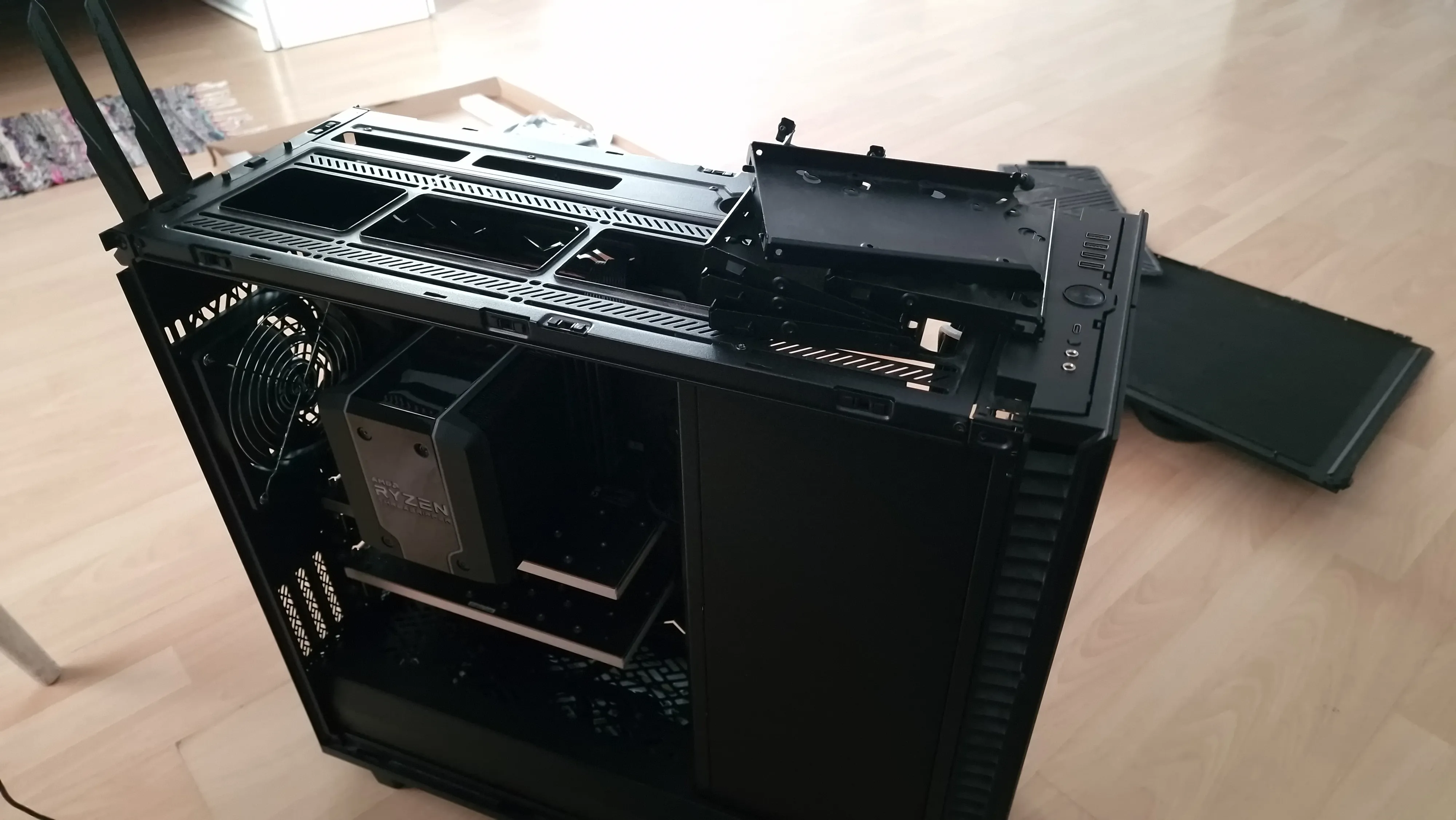

With it being quite clear that the Threadripper system is the way to go, I ordered the remaining parts and also thought of some more nice-to-haves I might want.

One addition was an extra 960 GB Corsair Force MP510 NVMe, I utilized the last onboard M.2 slot for it. This drive will be the fast scratch drive for a sandbox VM I plan to deploy for random testing, one-off overnight workloads and to have a "big machine" at hand if needed.

Then I thought of how to use the existing HDDs from the soon-old NAS. I originally wanted an all-SSD NAS, but why throw away perfectly fine HDDs? As the market value of 8 TB drives has dropped massively since I bought them, I didn't really feel like reselling them. Instead I asked a long-time friend of mine if he would have a use for them, and we settled on him renting them for basically power costs and a little extra, as he wanted a European backup mirror.

Lastly comes the last-minute addition of two old 1 TB laptop HDDs, I will be passing the chipset AHCI controller with the 8 TB HDDs to a VM for my friend, but I still have that dedicated onboard ASMedia controller with 2 SATA ports, so I decided to use it. These drives will be for nothing more than to have a local backup of the most critical data on a second type of media other than SSDs.

I admit this isn't my best cable management job, but the case is huge and a lot of the cables barely reach, though I definitely should have dusted it off before taking the photos.

Also, the keen-eyed among you probably noticed the 3 HDDs are different. Yes, these are just placeholders for the 3x 8 TB HDDs, as at the time of assembly those were still running in the previous NAS.

Finally, the system is complete, now it is time to make it efficient...

Power tuning

As already mentioned, power consumption is very important for a 24/7 appliance, so once the build was done I delved into the BIOS and started tuning it for low power operation and stability.

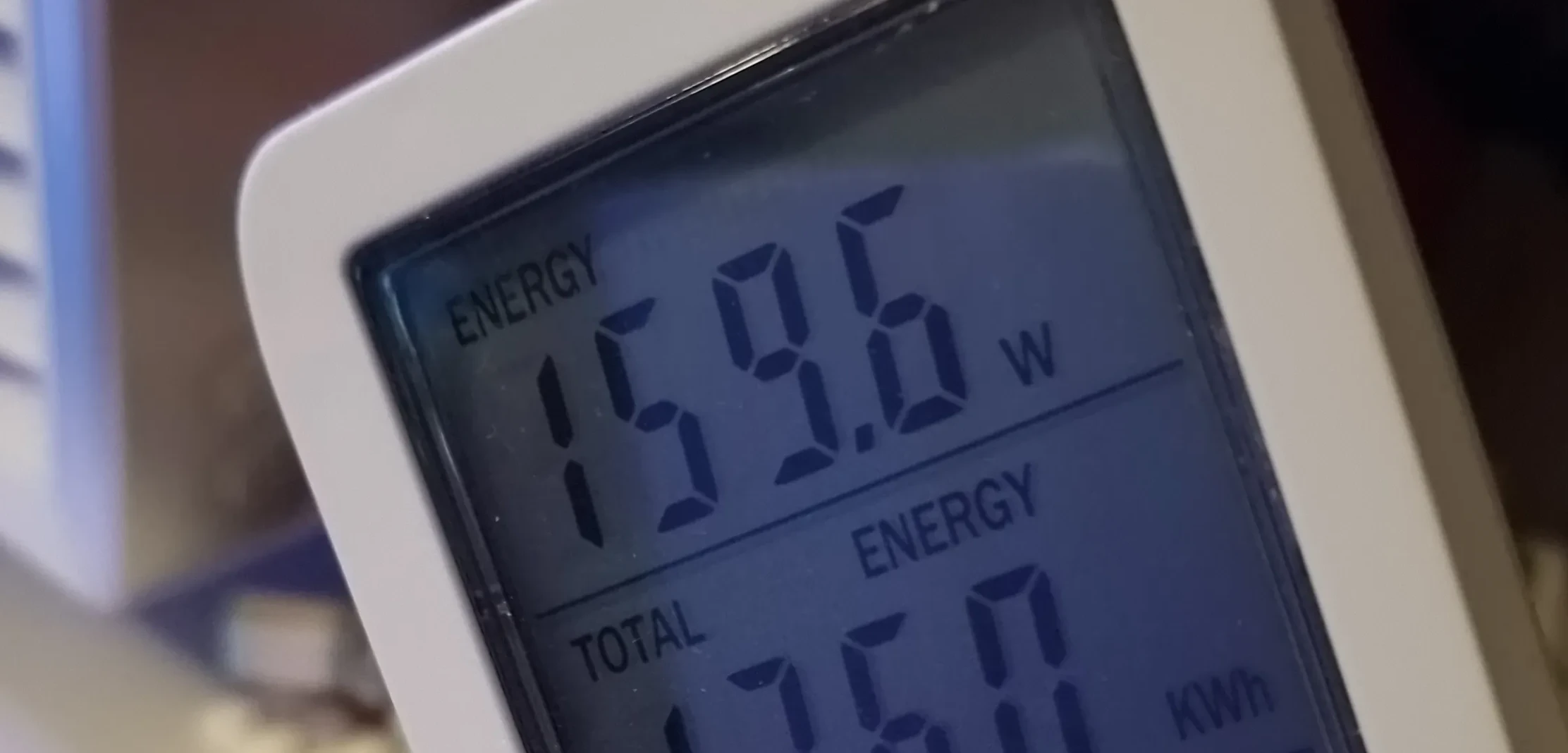

For brevity, I will not be describing all the terms used here, such as FCLK, IOD, CCD, etc. I will not be exploring the details of the chiplet packaging AMD uses. All wattage numbers are the differences I saw at the wall using a power meter, which includes all PSU, VRM, cable and PCB losses.

Boost and voltage (in)stability

The first thing I had to tackle was fixing a huge issue that plagued early Threadripper 3000 CPUs, including mine - they are not fully stable at stock voltage. I never encountered CPU instability in any actual workload I ran for the whole time of using this system as a desktop, but I do know one core crashes within a few hours of prime95, which is unacceptable for a system slated to be running critical infrastructure. The core that crashes is in the far CCDs, across the IO die away from the VRM, so my best guess is that these early chips are pushed a bit too hard out of the box and the voltage droop across the gigantic Threadripper package makes them crash. I non-scientifically tested this theory by pinning prime95 to only the far CCDs and leaving the near CCDs idle. The result was stable for over a day, which makes sense as the voltage didn't drop as much before it got to the far CCDs due to half of the CPU being idle.

Either way, the fix for this is obvious - higher voltage for a given frequency. But I did not want to push the CPU any higher above 1.4V than it already did by itself when trying to boost, so I thought about it and ended up entirely disabling core performance boost, effectively forcing the CPU to sit at base clock all the time, which for this 24-core Threadripper is still a very respectable 3.8 GHz, plenty enough for server duty. Then I proceeded to up the voltage offset until the CPU was stable on multi-hour prime95 stress tests, settling on +85mV in the end.

Due to the 3.8 GHz base clock lock, this actually results in an all-core voltage of only 1.2V, which is lower than the 1.3V the chip does by itself as it tries to boost to 4 GHz.

Somewhat unexpectedly for me, this actually resulted in a low-load (1-2% CPU usage) power saving of almost 20W, maybe AMD should actually try to be less aggressive with their boost algorithm...

Memory speed and FCLK

Next big thing on the list was the RAM speed and Infinity Fabric speed. I'm running 8 dual-rank DIMMs, which by AMD spec is supported at up to 2666 Mbps, but this "gamer" motherboard believes official specs are merely suggestions and runs the RAM at 3200 Mbps (which is what the RAM is rated for). Originally I didn't mind this too much, but I decided to check what happens if I drop it to 2666 and thus a FCLK of 1333. The result was a shocking 30W drop in low-load power consumption - lesson learnt: big IO chiplet is power hungry. As peak performance is not the goal here, I decided to leave it at 2666/1333 for the power savings.
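To put the performance cost of that trade into perspective, here is a small sketch of the clock and bandwidth math. It assumes FCLK runs 1:1 with the memory clock (as in the 2666/1333 pairing above), four memory channels on TRX40, and theoretical peak numbers only, so real throughput will be lower in both cases.

```python
# Memory clock and theoretical peak bandwidth for a DDR4 transfer rate.
# Assumptions: FCLK coupled 1:1 with MEMCLK, quad-channel TRX40 platform.
CHANNELS = 4
BYTES_PER_TRANSFER = 8   # 64-bit data bus per channel, ECC bits excluded


def memclk_mhz(mt_per_s: int) -> float:
    """DDR moves two transfers per clock, so the clock is half the rate."""
    return mt_per_s / 2


def peak_bandwidth_gbs(mt_per_s: int) -> float:
    """Theoretical peak bandwidth in GB/s across all channels."""
    return mt_per_s * 1e6 * BYTES_PER_TRANSFER * CHANNELS / 1e9


for rate in (3200, 2666):
    print(f"DDR4-{rate}: MEMCLK/FCLK {memclk_mhz(rate):.0f} MHz, "
          f"peak {peak_bandwidth_gbs(rate):.1f} GB/s")

loss = 1 - peak_bandwidth_gbs(2666) / peak_bandwidth_gbs(3200)
print(f"Peak bandwidth given up: {loss:.1%}")
```

So the 30W saving costs roughly a sixth of the theoretical memory bandwidth, which is an easy trade for a NAS.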

Don't touch DF states!

After seeing the big drop in power from lowering the FCLK, I tried enabling "DF C states", or Data Fabric states, which allow the IO die to downclock when idle. Alongside that I also had to explicitly disable SOC_OC and LN2 modes. Finally I saw the IO die downclock, but then the issues started popping up. As it turns out, AMD has good reason to disable IOD power saving by default: the SATA, USB and sometimes even PCIe devices randomly started locking up or disconnecting from the system whenever the fabric downclocked!

Secondly, it didn't actually matter, the power savings were there - with almost an extra 15W shaved off compared to a flat 1333 FCLK - but it jumped back up at the slightest hint of load, even a single idling Windows VM was enough to nullify it, so I ended up reverting these settings back to defaults.

VRM phase shedding

Turns out VRM phase shedding - which is the technique of turning off VRM power stages when the power demand is low - is entirely disabled by default on this motherboard. It took me quite some time to find it in the BIOS, but after setting VRM power management to "low performance" (what a name...), it dropped almost 20W of low-load power at the wall, which seems really high but it is what I observed. Presumably frequency ramping might be slower with more aggressive phase shedding, but I did not observe any appreciable performance losses, so I kept it this way.

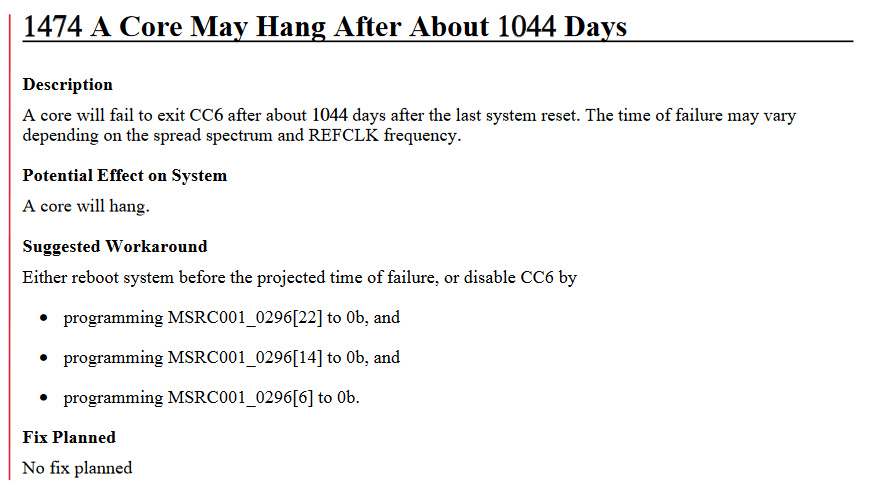

Core C-States

The last power saving optimization I implemented was enabling CPU C6 states. This is a core deep-sleep state that is disabled by default. I don't think there is a single Ryzen product whose C6 states don't carry some form of bug with them, but for Zen 2 CPUs this bug comes in the form of a hard system lock-up after 1044 days of uptime - the cores will fail to exit C6 state after that.

As I don't foresee myself ever getting near 3 years of uptime on this thing, this is a non-issue for me. C6 enabled, 25W saved, simple as that.

Power tuning final numbers

All of this power saving added up to a reduction in low-load test workload power consumption from the "all-stock" 250W down to 160W with all the SSDs and PCIe devices installed (but not the HDDs). Quite an incredible power reduction from just changing a few BIOS settings, of course ignoring some of the attached performance reductions.
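As a cross-check, the individual savings reported in the sections above can be tallied against the measured before/after numbers. This is a rough sketch: the per-setting values are the rounded "almost X W" figures, so the sum is expected to overshoot slightly, the reverted DF C-state saving is left out, and the yearly cost uses the roughly 7 CZK per kWh price mentioned earlier with 24/7 operation assumed.

```python
# Tally of the per-setting savings versus the measured wall power.
# Values are the rounded figures quoted in the text; DF C-states are
# excluded because that setting was reverted.
savings_w = {
    "Boost disabled + voltage offset": 20,
    "DDR4-2666 / FCLK 1333": 30,
    "VRM phase shedding": 20,
    "Core C6 states": 25,
}
STOCK_W, TUNED_W = 250, 160
PRICE_CZK_PER_KWH = 7   # approximate price, assumed constant

summed = sum(savings_w.values())
measured = STOCK_W - TUNED_W
annual_czk = measured / 1000 * 24 * 365 * PRICE_CZK_PER_KWH

print(f"Sum of individual savings: {summed} W")
print(f"Measured at the wall:      {measured} W")
print(f"Saved per year at 24/7:    {annual_czk:.0f} CZK")
```

The rounded individual figures sum to 95W against a measured 90W, which is about as close as wall-meter readings get, and 90W around the clock is worth roughly 5500 CZK per year.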

Wrapping up

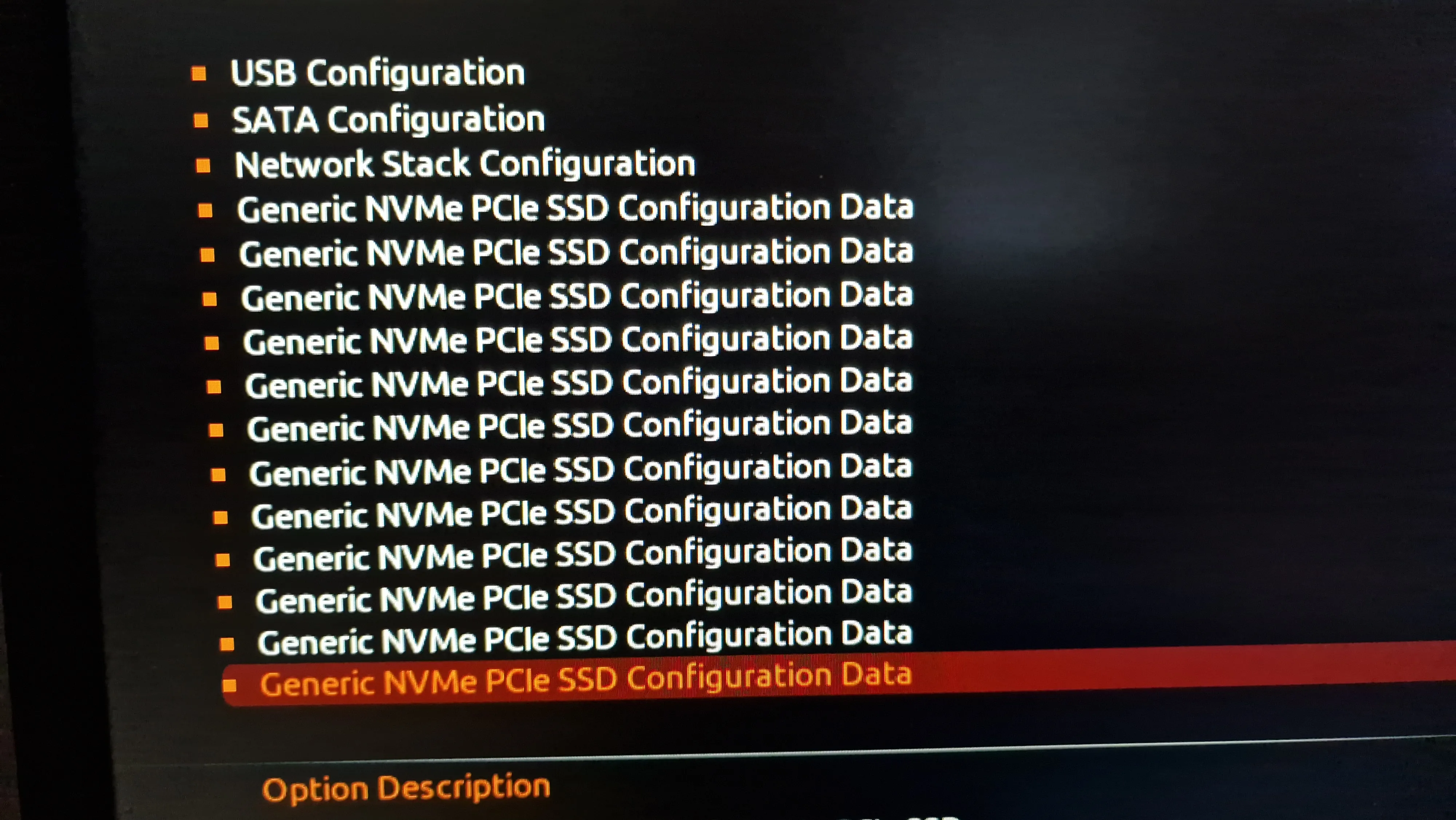

With the system assembled, BIOS configured for low-power (relatively speaking, this is still a Threadripper), it was time to install and configure Proxmox VE, do a quick final burn-in test and start migrating VMs. I won't go into the software side here, that may be for a future article on running Proxmox VE on consumer-grade hardware with "odd usecases", but I will put this amusing BIOS drive listing in here.

- AMD Ryzen Threadripper 3960X

- CoolerMaster Wraithripper

- GIGABYTE TRX40 AORUS XTREME

- 8x 32GB Micron MTA18ASF4G72AZ-3G2B1 DDR4-3200 ECC

- 3x SK Hynix PE3110 960GB NVMe SSD

- 1x Corsair Force MP510 960GB NVMe SSD

- 8x Samsung PM983 3.84TB NVMe SSD

- 2x random 1TB 2.5" 5400rpm SATA HDD

- 3x Toshiba MG06 8TB 7200rpm SATA HDD

- Intel X550 Dual 10GbE (Onboard)

- Mellanox ConnectX-3 Pro 40GbE QSFP+ with Noctua NF-A4x10 PWM

- Seasonic Focus PX 650W

- Fractal Design Define 7 XL + 3x Arctic P14 PST CO

Naturally the power consumption did not stay at a comfy 160W. Once the HDDs were added and all the VMs were migrated over, the system settled at around the original estimate of 200W, which is still quite decent for such a beastly NAS.

Thank you for reading my nonsense rambling and I hope it was at least enjoyable if not useful!